Perceived Truths as Policy Paradoxes

The quote I was going to use to introduce this topic — “You’re entitled to your own opinion, but not to your own facts” — itself illustrates my theme for today: that truths are often less than well founded, and so can turn policy discussions weird.

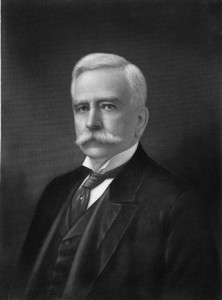

I’d always heard the quote attributed to Pat Moynihan, an influential sociologist who co-wrote Beyond the Melting Pot with Nathan Glazer, directed the MIT-Harvard Joint Center for Urban Studies shortly before I worked there (and left behind a closet full of Scotch, which stemmed from his perhaps apocryphal rule that no meeting extend beyond 4pm without a bottle on the table), and later served as a widely respected Senator from New York. The collective viziers of Wikipedia have found other attributions for the quote, however. (This has me once again looking for the source of “There go my people, I must go join them, for I am their leader,” supposedly Mahatma Gandhi but apparently some French general — but I digress.) The quote will need to stand on its own.

Here’s the Scott Jaschik item from Inside Higher Education that triggered today’s Rumination:

A new survey from ACT shows the continued gap between those who teach in high school and those who teach in college when it comes to their perceptions of the college preparation of today’s students. Nearly 90 percent of high school teachers told ACT that their students are either “well” or “very well” prepared for college-level work in their subject area after leaving their courses. But only 26 percent of college instructors reported that their incoming students are either “well” or “very well” prepared for first-year credit-bearing courses in their subject area. The percentages are virtually unchanged from a similar survey in 2009.

This is precisely what Moynihan (or whoever) had in mind: two parties to an important discussion each bearing their own data, and therefore unable to agree on the problem or how to address it. The teachers presumably think the professors have unreasonable expectations, or don’t work very hard to bring their students along; the professors presumably think the teachers aren’t doing their job. Each side therefore believes the problem lies with the other, and has data to prove it. Collaboration is unlikely, progress ditto. This is what Moynihan had observed about the federal social policy process.

The ACT survey reminded me of a similar finding that emerged back when I was doing college-choice research. I can’t locate a citation, but I recall hearing about a study that surveyed students who had been admitted to several different colleges.

The clever wrinkle in the study was that the students received several different survey queries, each purporting to be from one of the colleges to which he or she had been admitted, and each asking the student about the reasons for accepting or declining the admission offer. Here’s what they found: students told the institution they’d accepted that the reason was excellent academic quality, but they told the institutions they’d declined that the reason was better financial aid from the one they’d accepted.

More recently, I was talking to a colleague at another media company who was concerned about the volume of copyright infringement on a local campus. According to the company, the campus was hosting a great deal of copyright infringement, as measured by the volume of requests for infringing material being sent out by BitTorrent. But according to the campus, a scan of the campus network identified very few hosts running the peer-to-peer applications. The colleague thought the campus was blowing smoke; the campus thought the company’s statistics were wrong.

Although these three examples seem similar — parties disagreeing about facts — in fact they’re a bit different.

- In the teacher/professor example, the different conclusions presumably stem from different (and unshared) definitions of “prepared for college-level work”.

- In the accept/decline example, the different explanations possibly stem from students’ not wanting to offend the declined institution by questioning its quality, or wanting to think of their actual choice as good rather than cheap.

- In the infringement/application case, the different explanations stem from divergent metrics.

We’ve seen similar issues arise around institutional attributes in higher education. Do ratings like those from US News & World Report gather their own data, for example, or rely on presumably neutral sources such as the National Center for Education Statistics? This is critical where results have major reputational effects — consider George Washington University’s inflation of class-rank admissions data, and similar earlier issues with Claremont McKenna, Emory, Villanova, and others.

I’d been thinking about this because in my current job it’s quite important to understand patterns of copyright infringement on campuses. It would be good to figure out which campuses seem to have relatively low infringement rates, and to explore and document their policies and practices so that other campuses might benefit. For somewhat different reasons, it would be good to figure out which campuses seem to have relatively high infringement rates, so that they could be encouraged to adopt different policies and practices.

But here we run into the accept/decline problem. If the point of data collection is to identify and celebrate effective practice, there are lots of incentives for campuses to participate. But if the point is to identify and pressure less effective campuses, the incentives are otherwise.

Compounding the problem, there are different ways to measure infringement:

- One can rely on externally generated complaints, whose volume can vary for reasons having nothing to do with the volume of infringement,

- one can rely on internal assessments of network traffic, which can be inadvertently selective, and/or

- one can rely on external measures such as the volume of queries to known sources of infringement.

I’m sure there are others (and that’s without getting into the religious wars about copyright, middlemen, and so forth that I addressed in an earlier post).

There’s no full solution to this problem. But there are two things that help: collaboration and openness.

- By “collaboration,” I mean that parties to questions of policy or practice should work together to define and ideally collect data; that way, arguments can focus on substance.

- By “openness,” I mean that wherever possible raw data, perhaps anonymized, should accompany analysis and advocacy based on those data.

As an example of what this means, here are some thoughts for one of my upcoming challenges — figuring out how to identify campuses that might be models for others to follow, and also campuses that should probably follow them. Achieving this is important, but improperly done it can easily come to resemble the “top 25” lists from RIAA and MPAA that became so controversial and counterproductive a few years ago. The “top 25” lists became controversial partly because their methodology was suspect, partly because the underlying data were never available, and partly because they ignored the other end of the continuum, that is, institutions that had somehow managed to elicit very few Digital Millennium Copyright Act (DMCA) notices.

It’s clear there are various sources of data, even without internal access to campus network data:

- counts of DMCA notices sent by various copyright holders (some of which send notices methodically, following reasonably robust and consistent procedures, and some of which don’t),

- counts of queries involving major infringing sites, and/or

- network volume measures for major infringing protocols.

Those last two yield voluminous data, and so usually require sampling or data reduction of some kind. And not all of the queries or protocol traffic they capture involves infringement. It’s also clear, from earlier studies, that there’s substantial variation in these counts over time and even across similar campuses.

This means it will be important for my database, if I can create one, to include several different measures, especially counts from different sources for different materials, and to do that over a reasonable period of time. Integrating all this into a single dataset will require lots of collaboration among the providers. Moreover, the raw data necessarily will identify individual institutions, and releasing them that way would probably cause more opposition than support. Clumping them all together would bypass that problem, but also cover up important variation. So it makes much more sense to disguise rather than clump — that is, to identify institutions by a code name and enough attributes to describe them but not to identify them.
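Here is a minimal sketch of what “disguise rather than clump” might look like in practice. Everything in it is an assumption made up for illustration: the campus names, the measure names, the counts, the attribute bands, and the `disguise` function itself. It simply swaps each institution's name for a code name and attaches a few coarse attributes, so per-campus variation survives while the name does not.

```python
import random


def disguise(records, attributes, seed=None):
    """Swap institution names for code names and attach coarse attributes.

    Returns (disguised_rows, key). The key maps real names to code names
    and would stay private; only disguised_rows would be shared.
    """
    names = sorted({row["institution"] for row in records})
    rng = random.Random(seed)
    rng.shuffle(names)  # code numbers should not follow alphabetical order
    key = {name: f"Campus-{i:03d}" for i, name in enumerate(names, start=1)}

    disguised = []
    for row in records:
        # Copy every field except the identifying name.
        shared = {k: v for k, v in row.items() if k != "institution"}
        shared["campus"] = key[row["institution"]]
        shared.update(attributes[row["institution"]])
        disguised.append(shared)
    return disguised, key


# Hypothetical data: two made-up campuses, two measures, one period.
records = [
    {"institution": "Alpha College", "measure": "dmca_notices", "period": "month-1", "count": 12},
    {"institution": "Alpha College", "measure": "infringing_site_queries", "period": "month-1", "count": 340},
    {"institution": "Beta University", "measure": "dmca_notices", "period": "month-1", "count": 95},
    {"institution": "Beta University", "measure": "infringing_site_queries", "period": "month-1", "count": 2100},
]
# Hypothetical attributes, coarse enough to describe but not identify.
attributes = {
    "Alpha College": {"control": "private", "size_band": "under 5,000"},
    "Beta University": {"control": "public", "size_band": "over 20,000"},
}

disguised, key = disguise(records, attributes, seed=1)
for row in disguised:
    print(row)
```

How coarse the attributes need to be is a judgment call: a band that contains only one campus identifies it just as surely as its name would.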

It’ll then be important to be transparent: to lay out the detailed methodology used to “rank” campuses (as, for example, US News now does), and to share the disguised data so others can try different methodologies.

At a more general level, what I draw from the various examples is this: If organizations are to set policy and frame practice based on data — to become “data-driven organizations,” in the current parlance — then they must put serious effort into the source, quality, and accessibility of data. That’s especially true for “big data,” even though many current “big data” advocates wrongly believe that volume somehow compensates for quality.

If we’re going to have productive debates about policy and practice in connection with copyright infringement or anything else, we need to listen to Moynihan: To have our own opinions, but to share our data.

Old joke. Someone writes a computer program (creates an app?) that translates from English into Russian (say) and vice versa. Works fine on simple stuff, so the next test is a bit harder: “the spirit is willing, but the flesh is weak.” The program/app translates the phrase into Russian, then the tester takes the result, feeds it back into the program/app, and translates it back into English. Result: “The ghost is ready, but the meat is raw.”

About a decade ago, several of us were at an Internet2 meeting. A senior Microsoft manager spoke about relations with higher education (although looking back, I can’t see why Microsoft would present at I2. Maybe it wasn’t an I2 meeting, but let’s just say it was — never let truth get in the way of a good story). At the time, instead of buying a copy of Office for each computer, as Microsoft licenses required, many students, staff, and faculty simply installed Microsoft Office on multiple machines from one purchased copy — or even copied the installation disks and passed them around. That may save money, but it’s copyright infringement, and illegal.

But at least one campus, which I’ll call Pi University, balked. The Campus Agreement, PiU’s golf-loving CIO pointed out, had a provision no one had read carefully: if PiU withdrew from the Campus Agreement, he said, it might be required to list and pay for all the software copies that PiU or its students, faculty, and staff had acquired under the Campus Agreement — that is, to buy what it had been renting. The PiU CIO said that he had no way to comply with such a provision, and that therefore PiU could not in good faith sign an agreement that included it.

According to the

Over the past few years this changed, first gradually and then more abruptly.

The CIO from one campus — I’ll call it Omega University — discovered that a recent renewal of the bookstore contract provided that during the 15-year term of the contract, “the Bookstore shall be the University’s …exclusive seller of all required, recommended or suggested course materials, course packs and tools, as well as materials published or distributed electronically, or sold over the Internet.” The OmegaU CIO was outraged: “In my mind,” he wrote, “the terms exclusive and over the Internet can’t even be in the same sentence! And to restrict faculty use of technology for next 15 years is just insane.”

Today, for example, the rapidly growing capability of small smartphones has taxed previously underused cellular networks. Earlier, excess capability in the wired Internet prompted innovation in major services like

Progress, convergence, and integration in information technology have driven dramatic and fundamental change in the information technologies faculty, students, colleges, and universities have. That progress is likely to continue.

Everyone – or at least everyone between the ages of, say, 12 and 65 – has at least one authenticated online

It’s striking how many of these assumptions were invalid even as recently as five years ago. Most of the assumptions were invalid a decade before that (and it’s sobering to remember that the “3M” workstation was a lofty goal as recently as 1980 and cost nearly $10,000 in the mid-1980s, yet today’s

In colleges and universities, as in other organizations, information technology can promote progress by enabling administrative processes to become more efficient and by creating diverse, flexible pathways for communication and collaboration within and across different entities. That’s organizational technology, and although it’s very important, it affects higher education much the way it affects other organizations of comparable size.

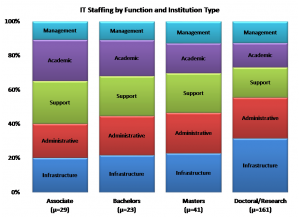

For example, by storing and distributing materials electronically, by enabling lectures and other events to be streamed or recorded, and by providing a medium for one-to-one or collective interactions among faculty and students, IT potentially expedites and extends traditional roles and transactions. Similarly, search engines and network-accessible library and reference materials vastly increase faculty and student access. The effect, although profound, nevertheless falls short of transformational. Chairs outside faculty doors give way to “learning management systems” like

For example, the

This most productively involves experience that otherwise might have been unaffordable, dangerous, or otherwise infeasible. Simulated chemistry laboratories and factories were an early example – students could learn to

This is the most controversial application of learning technology – “Why do we need faculty to teach calculus on thousands of different campuses, when it can be taught online by a computer?” – but also one that drives most discussion of how technology might transform higher education. It has emerged especially for disciplines and topics where instructors convey what they know to students through classroom lectures, readings, and tutorials. PLATO (Programmed Logic for Automated Teaching Operations) emerged from the

Sometimes a student gets all four together. For example, MIT marked me even before I enrolled as someone likely to play a role in technology (admission), taught me a great deal about science and engineering generally, electrical engineering in particular, and their social and economic context (instruction), documented through grades based on exams, lab work, and classroom participation that I had mastered (or failed to master) what I’d been taught (certification), and immersed me in an environment wherein data-based argument and rhetoric guided and advanced organizational life, and thereby helped me understand how to work effectively within organizations, groups, and society (socialization).

Instruction is an especially fertile domain for technological progress. This is because three trends converge around it:

One problem with such a future is that socialization, a key function of higher education, gets lost. This points the way to one major technology challenge for the future: Developing online mechanisms, for students who are scattered across the nation or the world, that provide something akin to rich classroom and campus interaction. Such interaction is central to the success of, for example, elite liberal-arts colleges and major residential universities. Many advocates of distance education believe that social media such as Facebook groups can provide this socialization, but that potential has yet to be realized.

Drivers headed for

“There are two possible solutions,” Hercule Poirot says to the assembled suspects in Murder on the Orient Express (that’s p. 304 in

The straightforward projection, analogous to Poirot’s simpler solution (an unknown stranger committed the crime, and escaped undetected), stems from projections of how institutions themselves might address each of the IT domains as new services and devices become available, especially cloud-based services and consumer-based end-user devices. The core assumptions are that the important loci of decisions are intra-institutional, and that institutions make their own choices to maximize local benefit (or, in the economic terms I mentioned in

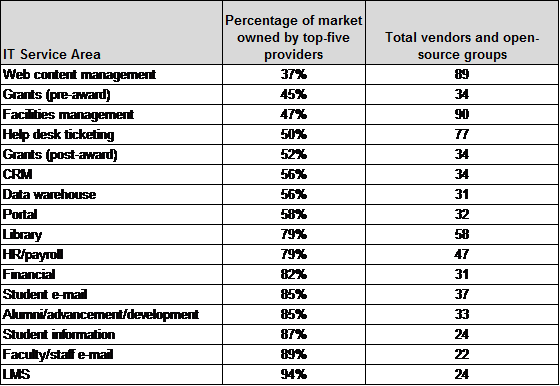

One clear consequence of such straightforward evolution is a continuing need for central guidance and management across essentially the current array of IT domains. As I tried to suggest in

If we think about the future unconventionally (as Poirot does in his second solution — spoiler in the last section below!), a somewhat more radical, extra-institutional projection emerges. What if Accenture, McKinsey, and Bain are right, and IT contributes very little to the distinctiveness of institutions — in which case colleges and universities have no business doing IT idiosyncratically or even individually?

Despite changes in technology and economics, and some organizational evolution, higher education remains largely hierarchical. Vertically-organized colleges and universities grant degrees based on curricula largely determined internally, curricula largely comprise courses offered by the institution, institutions hire their own faculty to teach their own courses, and students enroll as degree candidates in a particular institution to take the courses that institution offers and thereby earn degrees. As

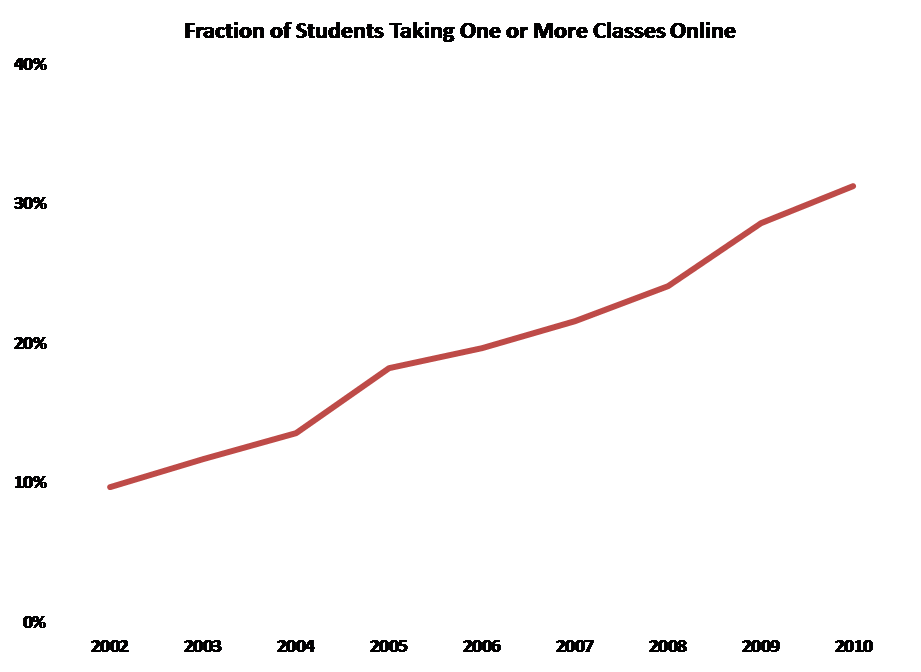

The first challenge, which is already being widely addressed in colleges, universities, and other entities, is distance education: how to deliver instruction and promote learning effectively at a distance. Some efforts to address this challenge involve extrapolating from current models (many community colleges, “laptop colleges”, and for-profit institutions are examples of this), some involve recycling existing materials (Open CourseWare, and to a large extent the Khan Academy), and some involve experimenting with radically different approaches such as game-based simulation. There has already been considerable success with effective distance education, and more seems likely in the near future.

As courses relate to curricula without depending on a particular institution, it becomes possible to imagine divorcing the offering of courses from the awarding of degrees. In this radical, no-longer-vertical future, some institutions might simply sell instruction and other learning resources, while others might concentrate on admitting students to candidacy, vetting their choices of and progress through coursework offered by other institutions, and awarding degrees. (Of course, some might try to continue both instructing and certifying.) To manage all this, it will clearly be necessary to gather, hold, and appraise student records in some shared or central fashion.

Poirot’s second solution to the Ratchett murder (everyone including the butler did it) requires astonishing and improbable synchronicity among a large number of widely dispersed individuals. That’s fine for a mystery novel, but rarely works out in real life.

Back in 1977,

Higher education traditionally has placed a high value on institutional individuality. Some years back a Harvard faculty colleague of mine,

At the same time, it has failed to make the critical distinction between what

The dilemma is this:

And so the $64 question: What would break this cycle? The answer is simple: sharing information, and committing to joint action. If the prisoners could communicate before deciding whether to defect or cooperate, their rational choice would be to cooperate. If colleges shared information about their plans and their deals, the likelihood of effective joint action would increase sharply. That would be good for the colleges and not so good for the vendor. From this perspective, it’s clear why non-disclosure clauses are so common in vendor contracts.
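The logic can be sketched with a toy payoff table. The numbers below are the conventional textbook illustration, not anything drawn from real campus-vendor deals, and the function names are made up for this example. The sketch compares what one party does when acting alone with what both would pick if they could bind themselves to a shared choice.

```python
# Illustrative payoffs only (higher is better); an assumption for this sketch.
# Each entry maps (my action, other's action) to (my payoff, other's payoff).
PAYOFF = {
    ("cooperate", "cooperate"): (3, 3),
    ("cooperate", "defect"): (0, 5),
    ("defect", "cooperate"): (5, 0),
    ("defect", "defect"): (1, 1),
}
ACTIONS = ("cooperate", "defect")


def best_response(other_action):
    """Best action for one party acting alone, given what the other does."""
    return max(ACTIONS, key=lambda a: PAYOFF[(a, other_action)][0])


def best_joint_commitment():
    """Best shared action when both parties commit to doing the same thing."""
    return max(ACTIONS, key=lambda a: PAYOFF[(a, a)][0])


if __name__ == "__main__":
    for other in ACTIONS:
        print(f"Acting alone against {other!r}: {best_response(other)}")
    print(f"With a binding joint commitment: {best_joint_commitment()}")
```

With these assumed payoffs, defecting is the better move for a party acting alone whatever the other party does, while a joint commitment favors cooperation. The commitment is what matters: talking without it leaves the go-it-alone incentives unchanged.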
