The Importance of Being Enterprise

…as Oscar Wilde well might have titled an essay about campus-wide IT, had there been such a thing back then.

Enterprise IT accounts for the lion’s share of campus IT staffing, expenditure, and risk. Yet it receives curiously little attention in national discussion of IT’s strategic role in higher education. Perhaps that should change. Two questions arise:

- What does “Enterprise” mean within higher-education IT?

- Why might the importance of Enterprise IT evolve?

What Does “Enterprise IT” Mean?

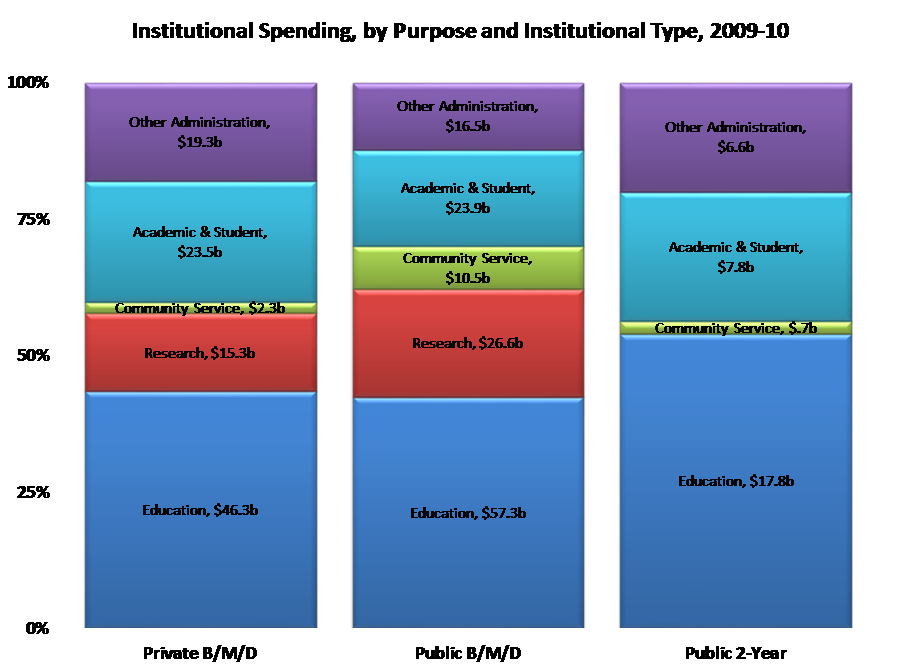

Here are some higher-education spending data from the federal Integrated Postsecondary Education Data System (IPEDS), omitting hospitals, auxiliaries, and the like:

Broadly speaking, colleges and universities deploy resources with goals and purposes that relate to their substantive mission or the underlying instrumental infrastructure and administration.

- Substantive purposes and goals comprise some combination of education, research, and community service. These correspond to the bottom three categories in the IPEDS graph above. Few institutions focus predominantly on research—Rockefeller University, for example. Most research universities pursue all three missions, most community colleges emphasize the first and third, and most liberal-arts colleges focus on the first.

- Instrumental activities are those that equip, organize, and administer colleges and universities for optimal progress toward their mission (the top two categories in the IPEDS graph). In some cases, they advance institutional mission by providing a common infrastructure for mission-oriented work. In other cases, they do it by providing campus-wide or departmental staffing, management, and processes to expedite that work. In still other cases, they do it through collaboration with other institutions or by contracting for outside services.

Education, research, and community service all use IT substantively to some extent. This includes technologies that directly or indirectly serve teaching and learning, technologies that directly enable research, and technologies that provide information and services to outside communities. Examples across the three include classroom technologies, learning management systems, technologies tailored to specific research data collection or analysis, research data repositories, library systems, and so forth.

Instrumental functions rely much more heavily on IT. Administrative processes rely increasingly on IT-based automation, standardization, and outsourcing. Mission-oriented IT applications share core infrastructure, services, and support. Core IT includes infrastructure such as networks and data centers, storage and computational clouds, and desktop and mobile devices; administrative systems ranging from financial, HR, student-record, and other back office systems to learning-management and library systems; and communications, messaging, collaboration, and social-media systems.

In a sense, then, there are six technology domains within college and university IT:

- the three substantive domains (education, research, and community service), and

- the three instrumental domains (infrastructure, administration, and communications).

Especially in the instrumental domains, “IT” includes not only technology, but also the services, support, and staffing associated with it. Each domain therefore has technology, service, support, and strategic components.

Based on this, here is a working definition: in higher education,

“Enterprise” IT comprises the IT-related infrastructure, applications, services, and staff

whose primary institutional role is instrumental rather than substantive.

Exploring Enterprise IT, framed thus, entails focusing on technology, services, and support as they relate to campus IT infrastructure, administrative systems, and communications mechanisms, plus their strategic, management, and policy contexts.

Why Might the Importance of Enterprise IT Evolve?

Three reasons: magnitude, change, and overlap.

Magnitude

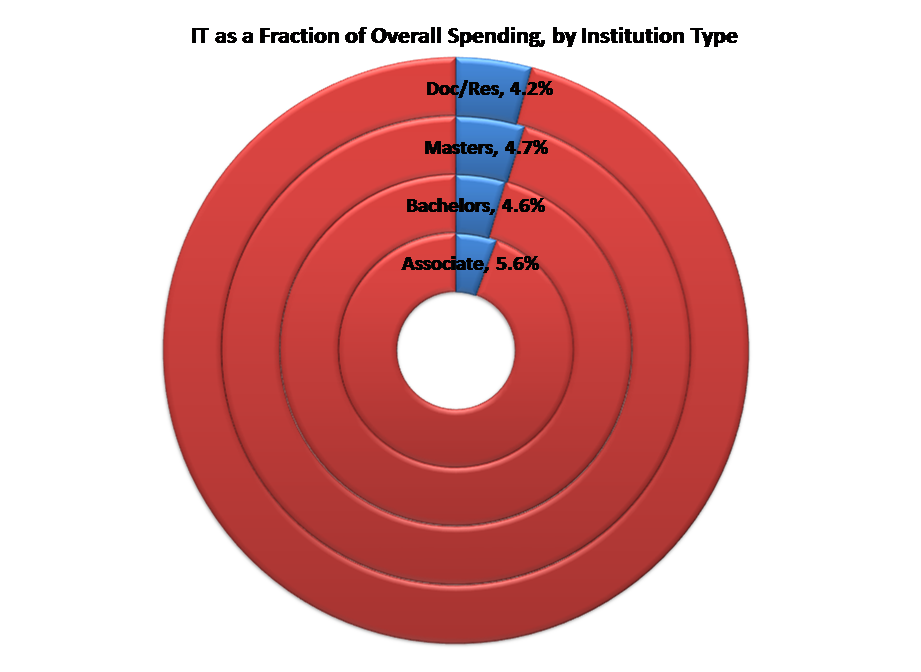

According to data from EDUCAUSE’s Core Data Service (CDS) and the federal Integrated Postsecondary Education Data System (IPEDS), the typical college or university spends just shy of 5% of its operating budget on IT. This varies a bit across institutional types:

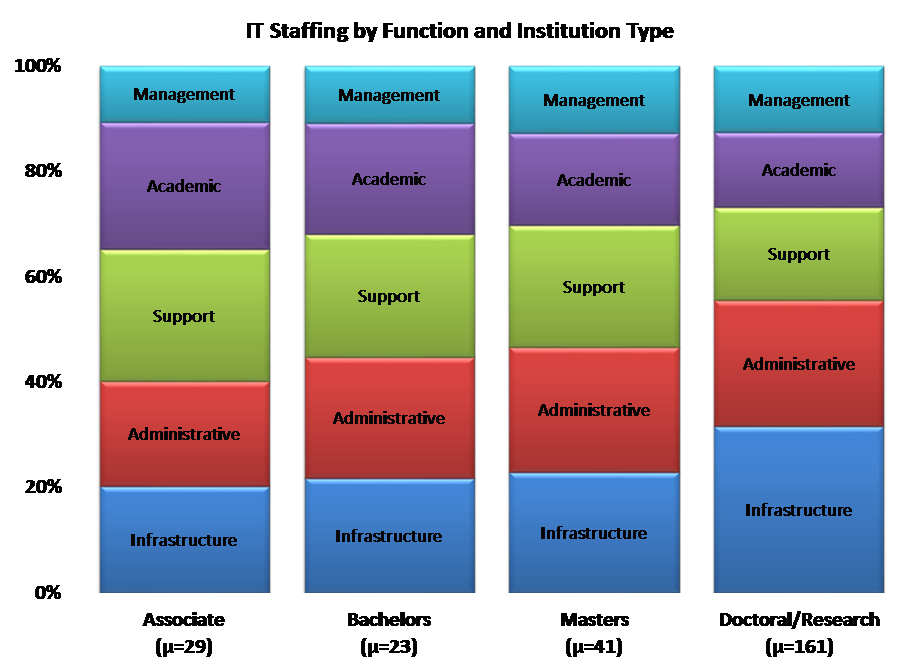

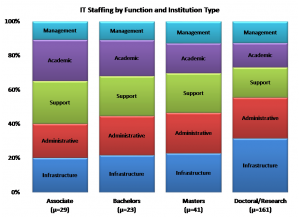

We lack good data breaking down IT expenditures further. However, we do have CDS data on how IT staff distribute across different IT functions. Here is a summary graph, combining education and research into “academic” (community service accounts for very little dedicated IT effort):

Thus my assertion above that Enterprise IT accounts for the lion’s share of IT staffing. Even if we omit the “Management” component, Enterprise IT comprises 60-70% of staffing including IT support, almost half without. The distribution is even more skewed for expenditure, since hardware, applications, services, and maintenance are disproportionately greater in Administration and Infrastructure.

Why, given the magnitude of Enterprise relative to other college and university IT, has it not been more prominent in strategic discussion? There are at least two explanations:

- relatively slow change in Enterprise IT, at least compared to other IT domains (rapidly-changing domains rightly receive more attention than stable ones), and

- overlap—if not competition—between higher-education and vendor initiatives in the Enterprise space.

Change

Enterprise IT is changing thematically, driven by mobility, cloud, and other fundamental changes in information technology. It also is changing specifically, as concrete challenges arise.

Consider, as one way to approach the former, these five thematic metamorphoses:

- In systems and applications, maintenance is giving way to renewal. At one time colleges and universities developed their own administrative systems, equipped their own data centers, and deployed their own networks. In-house development has given way to outside products and services installed and managed on campus, and more recently to the same products and services delivered in or from the cloud.

- In procurement and deployment, direct administration and operations are giving way to negotiation with outside providers and oversight of the resulting services. Whereas once IT staff needed to have intricate knowledge of how systems worked, today that can be less useful than effective negotiation, monitoring, and mediation.

- In data stewardship and archiving, segregated data and systems are giving way to integrated warehouses and tools. Historical data used to remain within administrative systems. The cost of keeping them “live” became too high, and so they moved to cheaper, less flexible, and even more compartmentalized media. The plunging price of storage and the emergence of sophisticated data warehouses and business-intelligence systems reversed this. Over time, storage-based barriers to data integration have gradually fallen.

- In management support, unidimensional reporting is giving way to multivariate analytics. Where once summary statistics emerged separately from different business domains, and drawing inferences about their interconnections required administrative experience and intuition, today connections can be made at the record level deep within integrated data warehouses. Speculating about relationships between trends is giving way to exploring the implications of documented correlations.

- In user support, authority is giving way to persuasion. Where once users had to accept institutional choices if they wanted IT support, today they choose their own devices, expect campus IT organizations to support them, and bypass central systems if support is not forthcoming. To maintain the security and integrity of core systems, IT staff can no longer simply require that users behave appropriately; rather, they must persuade users to do so. This means that IT staff increasingly become advocates rather than controllers. The required skillsets, processes, and administrative structures have been changing accordingly.
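The contrast between unidimensional reporting and record-level analytics in the list above can be made concrete with a small sketch. Everything in it is hypothetical: the student IDs, the aid amounts, the retention flags, and the function name `retention_by_aid` are illustrative assumptions, not data or methods from any campus system.

```python
# Hypothetical records from two business domains, keyed by student ID.
# The IDs and values are invented for illustration only.
aid_awarded = {"s1": 12000, "s2": 0, "s3": 8000, "s4": 0}
retained = {"s1": True, "s2": False, "s3": True, "s4": True}

# Unidimensional reporting: each domain produces its own summary statistic.
average_aid = sum(aid_awarded.values()) / len(aid_awarded)
overall_retention = sum(retained.values()) / len(retained)


def retention_by_aid(aid, kept):
    """Join the two domains at the record level and compare retention
    rates for students with and without aid."""
    groups = {True: [], False: []}
    for student_id in aid.keys() & kept.keys():  # students present in both
        groups[aid[student_id] > 0].append(kept[student_id])
    return {has_aid: sum(flags) / len(flags)
            for has_aid, flags in groups.items() if flags}


print(average_aid, overall_retention)
print(retention_by_aid(aid_awarded, retained))
```

The two separate summaries say nothing about whether aid and retention are related. The joined records at least document a correlation whose implications can then be explored.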

Beyond these broad thematic changes, a fourfold confluence is about to accelerate change in Enterprise IT: major systems approaching end-of-life, the growing importance of analytics, extensive mobility supported by third parties, and the availability of affordable, capable cloud-based infrastructure, services, and applications.

Systems Approaching End-of-Life

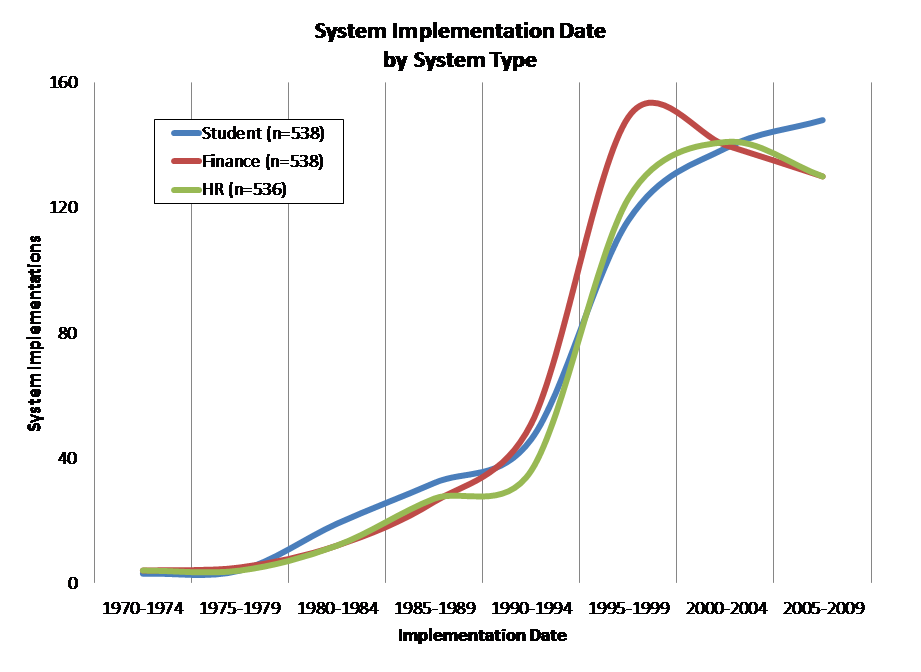

In the mid-1990s, many colleges and universities invested heavily in administrative-systems suites, often (if inaccurately) called “Enterprise Resource Planning” systems or “ERP.” Here, again drawing on CDS, are implementation data on Student, Finance, and HR/Payroll systems for non-specialized colleges and universities:

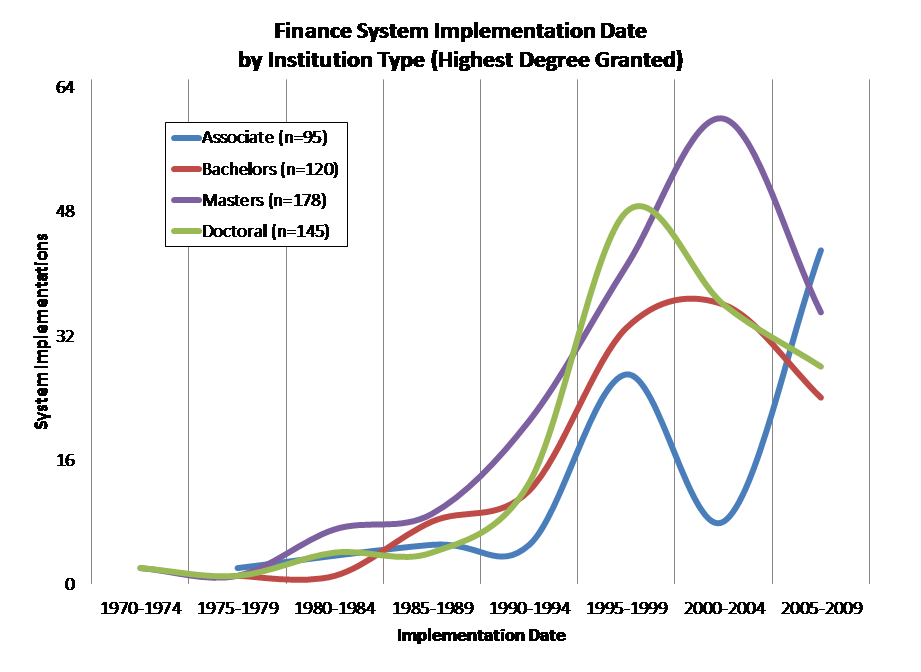

The pattern of implementation varies slightly across institution types. Here, for example, are implementation dates for Finance systems across four broad college and university groups:

Although these systems have generally been updated regularly since they were implemented, they are approaching the end of their functional life. That is, although they technically can operate into the future, the functionality of turn-of-the-century administrative systems likely falls short of what institutions currently require. Such functional obsolescence typically happens after about 20 years.

The general point holds across higher education: A great many administrative systems will reach their 20-year anniversaries over the next several years.
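The 20-year rule of thumb above lends itself to simple arithmetic. The sketch below assumes hypothetical implementation years (they are not CDS figures) and a hypothetical helper, `due_within`, that lists which systems would reach functional obsolescence within a planning horizon.

```python
FUNCTIONAL_LIFE_YEARS = 20  # the essay's rule of thumb, not a vendor figure

# Hypothetical implementation years for one campus; not CDS data.
implemented = {"Student": 1996, "Finance": 1994, "HR/Payroll": 1998}


def due_within(systems, as_of, horizon, life=FUNCTIONAL_LIFE_YEARS):
    """Return (system, obsolescence_year) pairs, earliest first, for systems
    whose functional life ends no later than as_of + horizon."""
    due = [(name, year + life) for name, year in systems.items()
           if year + life <= as_of + horizon]
    return sorted(due, key=lambda pair: pair[1])


print(due_within(implemented, as_of=2012, horizon=5))
```

With these assumed dates and a five-year horizon from 2012, the Finance and Student systems fall due while HR/Payroll does not.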

Moreover, many commercial administrative-systems providers end support for older products, even if those products have been maintained and updated. This typically happens as new products with different functionality and/or architecture establish themselves in the market.

These two milestones—functional obsolescence and loss of vendor support—mean that many institutions will be considering restructuring or replacement of their core administrative systems over the next few years. This, in turn, means that administrative-systems stability will give way to 1990s-style uncertainty and change.

Growing Importance of Analytics

Partly as a result of mid-1990s systems replacements, institutions have accumulated extensive historical data from their operations. They have complemented and integrated these by implementing flexible data-warehousing and business-intelligence systems.

Over the past decade, the increasing availability of sophisticated data-mining tools has given new purpose to data warehouses and business-intelligence systems that until now have largely provided simple reports. This has laid the foundation for the explosive growth of analytic management approaches (if, for the present, more rhetorical than real) in colleges and universities, and in the state and federal agencies that fund and/or regulate them.

As analytics become prominent in areas ranging from administrative planning to student feedback, administrative systems need to become better integrated across organizational units and data sources. The resulting datasets need to become much more widely accessible while complying with privacy requirements. Neither of these is easy to achieve. Achieving them together is more difficult still.

Mobility Supported by Third Parties

Until about five years ago campus communications—infrastructure and services both—were largely provided and controlled by institutions. This is no longer the case.

Much networking has moved from campus-provided wired and WiFi facilities to cellular and other connectivity provided by third parties, largely because those third parties also provide the mobile end-user devices students, faculty, and staff favor.

Separately, campus-provided email and collaboration systems have given way to “free” third-party email, productivity, and social-media services funded by advertising rather than institutional revenue. That mobile devices and their networking are largely outside campus control is triggering fundamental rethinking of instruction, assessment, identity, access, and security processes. This rethinking, in turn, is triggering re-engineering of core systems.

Affordable, Capable Cloud

Colleges and universities have long owned and managed IT themselves, based on two assumptions: that campus infrastructure needs are so idiosyncratic that they can only be satisfied internally, and that campuses are more sophisticated technologically than other organizations.

Both assumptions held well into the 1990s. That has changed. “Outside” technology has caught up to and surpassed campus technology, and campuses have gradually recognized and begun to avoid the costs of idiosyncrasy.

As a result, outside services ranging from commercially hosted applications to cloud infrastructure are rapidly supplanting campus-hosted services. This has profound implications for IT staffing—both levels and skillsets.

The upshot is that Enterprise, already the largest component of higher-education IT, is entering a period of dramatic change.

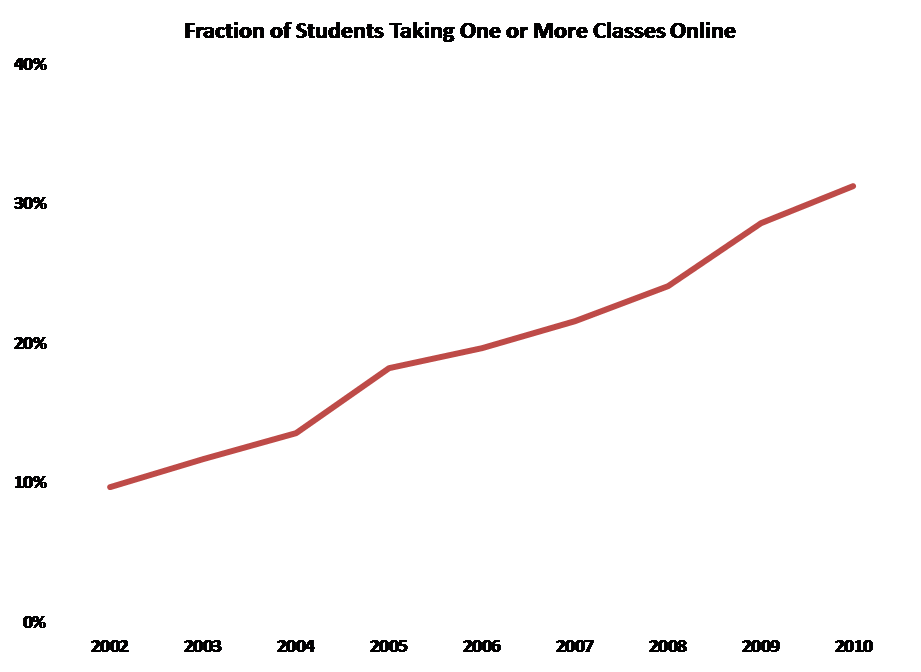

Beyond change in IT, the academy itself is evolving dramatically. For example, online enrollment is becoming increasingly common. As the Sloan Foundation reports, the fraction of students taking some or all of their coursework online is increasing steadily:

This has implications not only for pedagogy and learning environments, but also for the infrastructure and applications necessary to serve remote and mobile students.

Changes in the IT and academic enterprises are one reason Enterprise IT needs more attention. A second is the panoply of entities that try to influence Enterprise IT.

Overlap

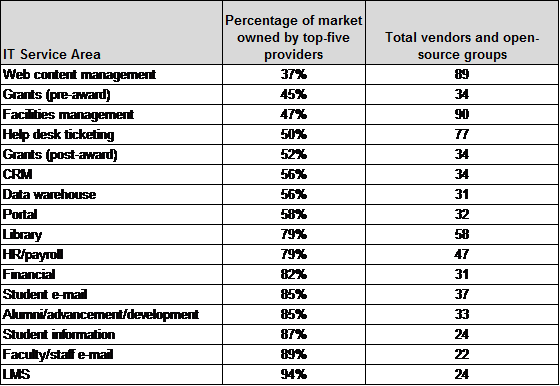

One might expect colleges and universities to have relatively consistent requirements for administrative systems, and therefore that the market for those would consist largely of a few major widely-used products. The facts are otherwise. Here are data from the recent EDUCAUSE Center for Applied Research (ECAR) research report The 2011 Enterprise Application Market in Higher Education:

The closest we come to a compact market is for learning management systems, where 94% of installed systems come from the top 5 vendors. Even in this area, however, there are 24 vendors and open-source groups. At the other extreme is web content management, where 89 active companies and groups compete and the top providers account for just over a third of the market.

One way major vendors compete under circumstances like these is by seeking entrée into the informal networks through which institutions share information and experiences. They do this, in many cases, by inviting campus CIOs or administrative-systems heads to join advisory groups or participate in vendor-sponsored conferences.

That these groups are usually more about promoting product than seeking strategic or technical advice is clear. They are typically hosted and managed by corporate marketing groups, not technical groups. In some cases the advisory groups comprise only a few members, in some cases they are quite large, and in a few cases there are various advisory tiers. CIOs from large colleges and universities are often invited to various such groups. For the most part these groups have very little effect on vendor marketing, and even less on technical architecture and direction.

So why do CIOs attend corporate advisory board meetings? The value to CIOs, aside from getting to know marketing heads, is that these groups’ meetings provide a venue for engaging enterprise issues with peers. The problem is that the number of meetings and their oddly overlapping memberships lead to scattershot conversations inevitably colored by the hosts’ marketing goals and technical choices. It is neither efficient nor effective for higher education to let vendors control discussions of Enterprise IT.

Before corporate advisory bodies became so prevalent, there were groups within higher-education IT that focused on Enterprise IT and especially on administrative systems and network infrastructure. Starting with 1950s workshops on the use of punch cards in higher education, CUMREC hosted meetings and publications focused on the business use of information technology. CAUSE emerged from CUMREC in the late 1960s, and remained focused on administrative systems. EDUCOM came into existence in the mid-1960s, and its focus evolved to complement those of CAUSE and CUMREC by addressing joint procurement, networking, academic technologies, copyright, and in general taking a broad, inclusive approach to IT. Within EDUCOM, the Net@EDU initiative focused on networking much the way CUMREC focused on business systems.

As these various groups melded into a few larger entities, especially EDUCAUSE, Enterprise IT remained a focus, but it was only one of many. Especially as the Y2K challenge prompted increased attention to administrative systems and intensive communications demands prompted major investments in networking, the prominence of Enterprise IT issues in collective work diffused further. Internet2 became the focal point for networking engagements, and corporate advisory groups became the focal point for administrative-systems engagements. More recently, entities such as Gartner, the Chronicle of Higher Education, and edu1world have tried to become influential in the Enterprise IT space.

The results of the overlap among vendor groups and associations, unfortunately, are scattershot attention and dissipated energy in the higher-education Enterprise IT space. Neither serves higher education well. Overlap thus joins accelerated change as a major argument for refocusing and reenergizing Enterprise IT.

The Importance of Enterprise IT

Enterprise IT, through its emphasis on core institutional activities, is central to the success of higher education. Yet the community’s work in the domain has yet to coalesce into an effective whole. Perhaps this is because we have been extremely respectful of divergent traditions, communities, and past achievements.

We must not be disrespectful, but it is time to change this: to focus explicitly on what Enterprise IT needs in order to continue advancing higher education, to recognize its strategic importance, and to restore its prominence.

9/25/12 gj-a
