Note to prospective readers: This post has evolved, through extensive revision and expansion and more careful citation, into a paper available at http://gjackson.us/it-he.pdf.

You might want to read that paper, which is much better and more complete, instead of this post, unless you like the pictures here, which for the moment aren’t in the paper. Even if you read this to see the pictures, please go read the other.

“Which way to Millinocket?,” a traveler asks. “Well, you can go west to the next intersection…” the drawling down-east Mainer replies in the Dodge and Bryan story,

“…get onto the turnpike, go north through the toll gate at Augusta, ’til you come to that intersection…. well, no. You keep right on this tar road; it changes to dirt now and again. Just keep the river on your left. You’ll come to a crossroads and… let me see. Then again, you can take that scenic coastal route that the tourists use. And after you get to Bucksport… well, let me see now. Millinocket. Come to think of it, you can’t get there from here.”

PLATO and its programmed-instruction kin were supposed to transform higher education. So were the Apple II, and then the personal computer – PC and then Mac – and then the “3M” workstation (megapixel display, megabyte memory, megaflop speed) for which Project Athena was designed. So were simulated laboratories, so were BITNET and then the Internet, so were MUDs, so was Internet2, so was artificial intelligence, so was supercomputing.

Each of these most certainly has helped higher education grow, evolve, and gain efficiency and flexibility. But at its core, higher education remains very much unchanged. That may no longer suffice.

What about today’s technological changes and initiatives – social media, streaming video, multi-user virtual environments, mobile devices, the cloud? Are they to be evolutionary, or transformational? If higher education needs the latter, can we get there from here?

It’s important to start conversations about questions like these from a common understanding of information technologies that currently play a role in higher education, what that role is, and how technologies and their roles are progressing. That’s what prompted these musings.

Information Technology

For the most part, “information technology” means a tripartite array of hardware and software:

- end-user devices, which today range from large desktop workstations to small mobile phones, typically with some kind of display, some way to make choices and enter text, and various other capabilities variously enabled by hardware and software;

- servers, which comprise not just racks of processors, storage, and other hardware but rather are aggregations of hardware, software, applications, and data that provide services to multiple users (when the aggregation is elsewhere, it’s often called “the cloud” today); and

- networks, wireless or wired, which interlink local servers, remote server clouds, and end-user devices, and which typically comprise copper and glass cabling, routers and switches and optronics, and a network operating system plus some authentication and logging capability.

Information technology tends to progress rapidly but unevenly, with progress or shortcomings in one domain driving or retarding progress in others.

Today, for example, the rapidly growing capability of small smartphones has taxed previously underused cellular networks. Earlier, excess capability in the wired Internet prompted innovation in major services like Google and YouTube. The success of Google and Amazon forced innovation in the design, management, and physical location of servers.

Perhaps the most striking aspects of technological progress have been its convergence and integration. Whereas once one could reasonably think separately about servers, networks, and end-user devices, today the three are not only tightly interconnected and interdependent, but increasingly their components are indistinguishable. Network switches are essentially servers, servers often comprise vast arrays of the same processors that drive end-user devices plus internal networks, and end-user devices readily tackle tasks – voice recognition, for example – that once required massive servers.

Access to Information Technology

Progress, convergence, and integration in information technology have driven dramatic and fundamental change in the information technologies faculty, students, colleges, and universities have. That progress is likely to continue.

Here, as a result, are some assumptions we can reasonably make today:

- Households have some level of broadband access to the Internet, and at least one computer capable of using that broadband access to view and interact with Web pages, handle email and other messaging, listen to audio, and view videos of at least YouTube quality.

- Teenagers and most adults have some kind of mobile phone, and that phone usually has the capability to handle routine Internet tasks like viewing Web pages and reading email.

- Colleges and universities have building and campus networks operating at broadband speeds of at least 10Mb/sec, and most have wireless networks operating at 802.11b (11Mb/sec) or greater speed.

- Server capacity has become quite inexpensive, largely because “cloud” providers have figured out how to gain and then sell economy of scale.

- Everyone – or at least everyone between the ages of, say, 12 and 65 – has at least one authenticated online identity, including email and other online service accounts; Facebook, Twitter, Google, or other social-media accounts; online banking, financial, or credit-card access; or network credentials from a school, college or university, or employer.

- Everyone knows how to search on the Internet for material using Google, Bing, or other search engines.

- Most people have a digital camera, perhaps integrated into their phone and capable of both still photos and videos, and they know how to send them to others or offload their photos onto their computers or an online service.

- Most college and university course materials are in electronic form, and so is a large fraction of library and reference material used by the typical student.

- Most colleges and universities have readily available facilities for creating video from lectures and similarly didactic events, whether in classrooms or in other venues, and for streaming or otherwise making that video available online.

It’s striking how many of these assumptions were invalid even as recently as five years ago. Most of the assumptions were invalid a decade before that (and it’s sobering to remember that the “3M” workstation was a lofty goal as recently as 1980 and cost nearly $10,000 in the mid-1980s, yet today’s iPhone almost exceeds the 3M spec).

Looking a bit into the future, here are some further assumptions that probably will be safe:

- Typical home networking and computers will have improved to the point they can handle streamed video and simple two-way video interactions (which means that at least one home computer will have an add-on or built-in camera).

- Most people will know how to communicate with individuals or small groups online through synchronous social media or messaging environments, in many cases involving video.

- Authentication and monitoring technologies will exist to enable colleges and universities to reasonably ensure that their testing and assessment of student progress is protected from fraud.

- Pretty much everyone will have the devices and accounts necessary for ubiquitous connectivity with anybody else and to use services from almost any college, university, or other educational provider.

Technology, Teaching, and Learning

In colleges and universities, as in other organizations, information technology can promote progress by enabling administrative processes to become more efficient and by creating diverse, flexible pathways for communication and collaboration within and across different entities. That’s organizational technology, and although it’s very important, it affects higher education much the way it affects other organizations of comparable size.

Somewhat more distinctively, information technology can become learning technology, an integral part of the teaching and learning process. Learning technology sometimes replaces traditional pedagogies and learning environments, but more often it enhances and expands them.

The basic technology and middleware infrastructure necessary to enable colleges and universities to reach, teach, and assess students appears to exist already, or will before long. This brings us to the next question: What applications turn information technology into learning technology?

To answer this, it’s useful to think about four overlapping functions of learning technology.

Amplify and Extend Traditional Pedagogies, Mechanisms, and Resources

For example, by storing and distributing materials electronically, by enabling lectures and other events to be streamed or recorded, and by providing a medium for one-to-one or collective interactions among faculty and students, IT potentially expedites and extends traditional roles and transactions. Similarly, search engines and network-accessible library and reference materials vastly increase faculty and student access. The effect, although profound, nevertheless falls short of transformational. Chairs outside faculty doors give way to “learning management systems” like Blackboard or Sakai or Moodle, wearing one’s PJs to 8am lectures gives way to watching lectures from one’s room over breakfast, and library schools become information-science schools. But the enterprise remains recognizable. Even when these mechanisms go a step further, enabling true distance education whereby students never set foot on campus (in 2011, 3.7% of all students took all their coursework through distance education), the resulting services remain recognizable. Indeed, they are often simply extensions of existing institutions’ campus programs.

Make Educational Events and Materials Available Outside the Original Context

For example, the Open Courseware initiative (OCW) started as a publicly accessible repository of lecture notes, problem sets, and other material from MIT classes. It has since grown to include similar material from scores of other institutions worldwide. Similarly, the newer Khan Academy has collected a broad array of instructional videos on diverse topics, some from classes and some prepared especially for Khan, and made those available for anyone interested in learning the material. OCW, Khan, and initiatives like them provide instructional material in pure form, rather than as part of curricula or degree programs.

Enable Experience-Based Learning

This most productively involves experience that otherwise might have been unaffordable, dangerous, or otherwise infeasible. Simulated chemistry laboratories and factories were an early example – students could learn to synthesize acetylene by trial and error without blowing up the laboratory, or to fine-tune just-in-time production processes without bankrupting real manufacturers. As computers have become more powerful, so have simulations become more complex and realistic. As simulations have moved to cloud-based servers, multi-user virtual environments have emerged, which go beyond simulation to replicate complex environments. Experiences like these were impossible to provide before the advent of powerful, inexpensive server clouds, ubiquitous networking, and graphically capable end-user devices.

Replace the Didactic Classroom Experience

This is the most controversial application of learning technology – “Why do we need faculty to teach calculus on thousands of different campuses, when it can be taught online by a computer?” – but also one that drives most discussion of how technology might transform higher education. It has emerged especially for disciplines and topics where instructors convey what they know to students through classroom lectures, readings, and tutorials. PLATO (Programmed Logic for Automated Teaching Operations) emerged from the University of Illinois in the 1960s as the first major example of computers replacing teachers, and has been followed by myriad attempts, some more successful than others, to create technology-based teaching mechanisms that tailor their instruction to how quickly students master material. (PLATO’s other major innovation was partnership with a commercial vendor, the now defunct Control Data Corporation.)

Higher Education

We now come to the $64 question: what role might trends in higher-education learning technology play in the potential transformation of higher education?

The transformational goal for higher education is to carry out its social and economic roles with greater efficiency and within its resource constraints. Many believe that such transformation requires a very different structure for future higher education. What might that structure be, and what role might information technologies play in its development?

The fundamental purpose of higher education is to advance society, polity, and the economy by increasing the social, political, and economic skills and knowledge of students – what economists call “human capital”. At the postsecondary level, education potentially augments students’ human capital in four ways:

- admission, which is to say declaring that a student has been chosen as somehow better qualified or more adaptable in some sense than other prospective students (this is part of Lester Thurow’s “job queue” idea);

- instruction, including core and disciplinary curricula, the essentially unidirectional transmission of concrete knowledge through lectures, readings, and the like, and also the explication and amplification of that through classroom, tutorial, and extracurricular guidance and discussion (this is what we often mean by the narrow term “teaching”);

- certification, specifically the measuring of knowledge and skill through testing and other forms of assessment; and

- socialization, specifically learning how to become an effective member of society independently of one’s origin family, through interaction with faculty and especially with other students.

Sometimes a student gets all four together. For example, MIT marked me even before I enrolled as someone likely to play a role in technology (admission), taught me a great deal about science and engineering generally, electrical engineering in particular, and their social and economic context (instruction), documented through grades based on exams, lab work, and classroom participation that I had mastered (or failed to master) what I’d been taught (certification), and immersed me in an environment wherein data-based argument and rhetoric guided and advanced organizational life, and thereby helped me understand how to work effectively within organizations, groups, and society (socialization).

Most students attend colleges whose admissions processes amount to open admission, or involve simple norms rather than competition. That is, anyone who meets certain standards, such as high-school completion with a given GPA or test score, is admitted. In 2010, almost half of all institutions reported having no admissions criteria, and barely 11% accepted fewer than 1/4 of their applicants. Moreover, most students do not live on campus: in 2007-08, only 14% of undergraduates lived in college-owned housing. This means that most of higher education has limited admission and socialization effects. Therefore, for the most part higher education affects human capital through instruction and certification.

Instruction is an especially fertile domain for technological progress. This is because three trends converge around it:

- ubiquitous connectivity, especially from students’ homes;

- the rapidly growing corpus of coursework offered online, either as formal credit-bearing classes or as freestanding materials from entities like OCW or Khan; and

- (perhaps more speculative) the growing willingness of institutions to grant credit and allow students to satisfy requirements through classes taken at other institutions or through some kind of testing or assessment.

Indeed, we can imagine a future where it becomes commonplace for students to satisfy one institution’s degree requirements with coursework from many other institutions. Further down this road, we can imagine there might be institutions that admit students, prescribe curriculum, certify progress, and grant degrees – but have no instructional faculty and do not offer courses. This, in turn, might spawn purely instructional institutions.

One problem with such a future is that socialization, a key function of higher education, gets lost. This points the way to one major technology challenge for the future: Developing online mechanisms, for students who are scattered across the nation or the world, that provide something akin to rich classroom and campus interaction. Such interaction is central to the success of, for example, elite liberal-arts colleges and major residential universities. Many advocates of distance education believe that social media such as Facebook groups can provide this socialization, but that potential has yet to be realized.

A second problem with such a future is that robust, flexible methods for assessing student learning at a distance remain either expensive or insufficient. For example, ProctorU and Kryterion are two of several commercial entities that provide remote exam proctoring, but they do so through somewhat intensive use of video observation, and that only works for rather traditional written exams. For another example, in the aftermath of 9/11 many universities figured out how to conduct doctoral thesis defenses using high-bandwidth videoconferencing facilities rather than flying in faculty from other institutions, but this simply reduced travel expense rather than changing the basic idea that several faculty members would examine one student at a time.

Millinocket

If learning technologies are to transform higher education, we must exploit opportunities and address problems. At the same time, transformed higher education cannot neglect important dimensions of human capital. In that respect, our goal should be not only to make higher education more efficient than it is today, but also better.

Drivers headed for Millinocket rarely pull over any more to ask directions of drawling downeasters. Instead, they rely on the geographic position and information systems built into their cars or phones or computers, which in turn rely on network connectivity to keep maps and traffic reports up to date. To be sure, reliance on GPS and GIS tends to insulate drivers from interaction with the diversity they pass along the road, much as Interstate highways standardized cross-country travel. So the gain from those applications is not without cost.

The same is true for learning technology: it will yield both gains and losses. Effective progress will result only if we explore and understand the technologies and their applications, decide how these relate to the structure and goals of higher education, identify obstacles and remedies, and figure out how to get there from here.

Back in the 1990s, Cliff Adelman, then at the US Department of Education, did a pioneering study of student “swirl,” that is, students moving through several institutions, perhaps with work intervals along the way, before earning degrees.

At first this didn’t work: fathom.com, for example, a collaboration among several first-tier research universities led by Columbia, found no market for its high-quality online offerings. (Its Executive Director has just written a thoughtful essay on MOOCs, drawing on her fathom.com experience.)

As recently as ten years ago, most campus IT services, everything from administrative systems through messaging and telephone systems to research technologies, were provided by campus entities using campus-based facilities, sometimes centralized and sometimes not. The same was true for the wired and then wireless networks that provided access to services, and for the desktop and laptop computers faculty, students, and staff used.

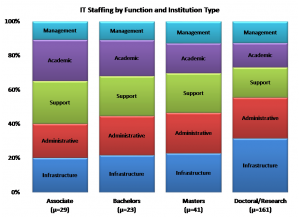

As I wrote in an earlier post about “Enterprise IT,” the scale of enterprise infrastructure and services within IT and the shift in their locus of control have major implications for and the organizations that have provided it. Campus IT organizations grew up around locally-designed services running on campus-owned equipment managed by internal staff. Organization, staffing, and even funding models ensued accordingly. Even in academic computing and user support, “heavy metal” experience was valued highly. The shifting locus of control makes other skills at least as valuable: the ability to negotiate with suppliers, to engage effectively with customers (indeed, to think of them as “customers” rather than “users”), to manage spending and investments under constraint, to explain.

To the extent the rock of e-learning and the hard place of enterprise IT frame our future, we not only need to rethink our organizations and what they do; we also need to rethink how we prepare, promote, and choose leaders for higher-education leaders on campus and elsewhere—the topic, fortuitously, of a recent ECAR report, and of widespread rethinking within EDUCAUSE.

To the extent the rock of e-learning and the hard place of enterprise IT frame our future, we not only need to rethink our organizations and what they do; we also need to rethink how we prepare, promote, and choose leaders for higher-education leaders on campus and elsewhere—the topic, fortuitously, of a recent ECAR report, and of widespread rethinking within EDUCAUSE.

Today, for example, the rapidly growing capability of small smartphones has taxed previously underused cellular networks. Earlier, excess capability in the wired Internet prompted innovation in major services like

Today, for example, the rapidly growing capability of small smartphones has taxed previously underused cellular networks. Earlier, excess capability in the wired Internet prompted innovation in major services like  Progress, convergence, and integration in information technology have driven dramatic and fundamental change in the information technologies faculty, students, colleges, and universities have. That progress is likely to continue.

Everyone – or at least everyone between the ages of, say, 12 and 65 – has at least one authenticated online

It’s striking how many of these assumptions were invalid even as recently as five years ago. Most of the assumptions were invalid a decade before that (and it’s sobering to remember that the “3M” workstation was a lofty goal as recently as 1980 and cost nearly $10,000 in the mid-1980s, yet today’s

In colleges and universities, as in other organizations, information technology can promote progress by enabling administrative processes to become more efficient and by creating diverse, flexible pathways for communication and collaboration within and across different entities. That’s organizational technology, and although it’s very important, it affects higher education much the way it affects other organizations of comparable size.

For example, by storing and distributing materials electronically, by enabling lectures and other events to be streamed or recorded, and by providing a medium for one-to-one or collective interactions among faculty and students, IT potentially expedites and extends traditional roles and transactions. Similarly, search engines and network-accessible library and reference materials vastly increase faculty and student access. The effect, although profound, nevertheless falls short of transformational. Chairs outside faculty doors give way to “learning management systems” like

For example, the

This most productively involves experience that otherwise might have been unaffordable, dangerous, or otherwise infeasible. Simulated chemistry laboratories and factories were an early example – students could learn to

This is the most controversial application of learning technology – “Why do we need faculty to teach calculus on thousands of different campuses, when it can be taught online by a computer?” – but also one that drives most discussion of how technology might transform higher education. It has emerged especially for disciplines and topics where instructors convey what they know to students through classroom lectures, readings, and tutorials. PLATO (Programmed Logic for Automated Teaching Operations) emerged from the

Sometimes a student gets all four together. For example, MIT marked me even before I enrolled as someone likely to play a role in technology (admission), taught me a great deal about science and engineering generally, electrical engineering in particular, and their social and economic context (instruction), documented through grades based on exams, lab work, and classroom participation that I had mastered (or failed to master) what I’d been taught (certification), and immersed me in an environment wherein data-based argument and rhetoric guided and advanced organizational life, and thereby helped me understand how to work effectively within organizations, groups, and society (socialization).

Instruction is an especially fertile domain for technological progress. This is because three trends converge around it:

One problem with such a future is that socialization, a key function of higher education, gets lost. This points the way to one major technology challenge for the future: Developing online mechanisms, for students who are scattered across the nation or the world, that provide something akin to rich classroom and campus interaction. Such interaction is central to the success of, for example, elite liberal-arts colleges and major residential universities. Many advocates of distance education believe that social media such as Facebook groups can provide this socialization, but that potential has yet to be realized.

Drivers headed for

“There are two possible solutions,” Hercule Poirot says to the assembled suspects in Murder on the Orient Express (that’s p. 304 in

The straightforward projection, analogous to Poirot’s simpler solution (an unknown stranger committed the crime, and escaped undetected), stems from projections of how institutions themselves might address each of the IT domains as new services and devices become available, especially cloud-based services and consumer-based end-user devices. The core assumptions are that the important loci of decisions are intra-institutional, and that institutions make their own choices to maximize local benefit (or, in the economic terms I mentioned in

One clear consequence of such straightforward evolution is a continuing need for central guidance and management across essentially the current array of IT domains. As I tried to suggest in

If we think about the future unconventionally (as Poirot does in his second solution; spoiler in the last section below!), a somewhat more radical, extra-institutional projection emerges. What if Accenture, McKinsey, and Bain are right, and IT contributes very little to the distinctiveness of institutions, in which case colleges and universities have no business doing IT idiosyncratically or even individually?

Despite changes in technology and economics, and some organizational evolution, higher education remains largely hierarchical. Vertically-organized colleges and universities grant degrees based on curricula largely determined internally, curricula largely comprise courses offered by the institution, institutions hire their own faculty to teach their own courses, and students enroll as degree candidates in a particular institution to take the courses that institution offers and thereby earn degrees. As

The first challenge, which is already being widely addressed in colleges, universities, and other entities, is distance education: how to deliver instruction and promote learning effectively at a distance. Some efforts to address this challenge involve extrapolating from current models (many community colleges, “laptop colleges”, and for-profit institutions are examples of this), some involve recycling existing materials (Open CourseWare, and to a large extent the Khan Academy), and some involve experimenting with radically different approaches such as game-based simulation. There has already been considerable success with effective distance education, and more seems likely in the near future.

As courses relate to curricula without depending on a particular institution, it becomes possible to imagine divorcing the offering of courses from the awarding of degrees. In this radical, no-longer-vertical future, some institutions might simply sell instruction and other learning resources, while others might concentrate on admitting students to candidacy, vetting their choices of and progress through coursework offered by other institutions, and awarding degrees. (Of course, some might try to continue both instructing and certifying.) To manage all this, it will clearly be necessary to gather, hold, and appraise student records in some shared or central fashion.

Poirot’s second solution to the Ratchett murder (everyone including the butler did it) requires astonishing and improbable synchronicity among a large number of widely dispersed individuals. That’s fine for a mystery novel, but rarely works out in real life.