(Scientific) Knowledge Discovery in Open Networked Environments: Some Policy Issues

(This is an edited version of comments from “The Future of Scientific Knowledge Discovery in Open Networked Environments: A National Symposium and Workshop,” held in Washington, DC, March 10-11, 2011, under the auspices of the National Academy of Sciences’ Board on Research Data and Information and its Computer Science and Telecommunications Board. The presentation slides can be found at http://gregj.us/oc9AtI)

First, a bit of personal history. The Coleman Report, released in 1966, was one of the first big studies of American elementary and secondary education, and especially of equality therein.

Some years after the initial report, I was working in a group that was reanalyzing data from the Coleman study. We had one of what we were told were two remaining copies of a dataset that had been collected as part of that study but had never been used. It was a 12-inch reel of half-inch magnetic tape containing the Coleman “Principals” data, which derived from a survey of principals in high schools and elementary schools.

The first challenge was to decipher the tape itself, which meant, as contemporaries among you may recall, trying every possible combination of labeling protocol, track count, and parity bit until one of them yielded data rather than gibberish. Once we did that – the tape turned out to be seven track, even parity, unlabeled – the next challenge was to make sure the codebooks (which gave the data layout – the schema, in modern terms – but not the original questions or choice sets) matched what was on the tape. By the time we did all that, we had decided that the Principals data weren’t all that relevant to our work, and so we put the analysis and the tape aside.

The 12-inch reel of tape moved with me and eventually ended up in my garage. Then we sold our house in Arlington, Massachusetts, and moved to Lexington. I had to clear out the garage, and there seemed no more reason to keep the tape than to keep various boxes of old Hollerith cards from my dissertation, and so off they all went to a dumpster.

Unfortunately, what I’d had was apparently the last remaining copy of the Principals data. The other so-called “original” copy had been discarded on the assumption that our research group would keep the tape. That illustrates what can happen with the LOCKSS (lots of copies keep stuff safe) strategy for data preservation: if everybody thinks somebody else is keeping a copy, then LOCKSS does not work terribly well.

Many of us who work in the social sciences, particularly at the policy end of things, never gather our own data. The research we do is almost always based on data that were collected by someone else but typically not analyzed by them. This notion that data collectors should be separate from data analysts is actually very well established and routine in my fields of work.

The Coleman work came early in my doctoral studies. Most of my work on research projects back then (at the Huron Institute, the Center for the Study of Public Policy, and Harvard’s Administration, Planning, and Social Policy programs in education) involved secondary analysis. Later, when it came time to do my own research, I used a large secondary dataset from the National Longitudinal Study of the High School Class of 1972 (usually called NLS72 for short). This study went on for years and years and years, and it has grown into a huge longitudinal array of data.

My research question, based on the first NLS72 follow-up, was whether financial aid made any difference in kids’ decisions about whether to enter college. The answer is “yes”, but the more complex question is whether that effect is big enough to matter. NLS72 taught me how important the relationship is between those who gather data and those who use them, and so it’s good to be here today to reflect on what I’ve learned since then.

My current employer, EDUCAUSE, is an association of most of the higher-education institutions in the United States and a number elsewhere. Among other things, we collect data from our members on a whole raft of questions: How much do you spend on personal computers? How many helpdesk staff do you have? To whom does the CIO report? We gather all of these data into our Core Data Service, which our members and many other folks then use for all sorts of purposes.

One of the things that has struck us over time is that we get very few questions from people about what a data item actually means. Users apparently make their own assumptions about what each data item means, and so although they are all producing research based ostensibly on the same data from the same source, this interpretation problem means they sometimes get very different results, even when they proceed identically from a statistical point of view.

If issues like this go unexamined, then research based on secondary, “discovered” sources can be very misleading. It’s critical, in doing such analysis, to be clear about some important attributes of data that are “discovered” rather than collected directly. I want to touch quickly on five attributes of “discovered” data that warrant attention: quality, location, format, access, and support.

Quality

The classic quality problem for secondary analysis is that people use data for a given purpose without understanding that the data collection may have been inappropriate for that purpose. There are two general issues here. One has to do with very traditional measures of data quality: whether the questions were valid and reliable, what the methodology was, and other such attributes. Since that dimension of quality is well understood, I’ll say no more about it here.

The other is something most people do not usually consider a quality issue, but any archivist would tell you is absolutely critical: you have to think about where data came from and why they were gathered (their “provenance”) because why people do things makes a difference in how they do them, and how people do things makes a difference in whether data are reusable.

One hears arguments that provenance matters little in the hard sciences and matters completely in the social sciences, but a great deal of the time the reverse is equally true. So the question of why someone gathered data is very important.

One key element of provenance I’ll call “primacy”, which is whether you are getting data from the people who gathered them or through intermediaries along the way. People often do not consider that. They say, “I’ve found some relevant data,” and that’s the end of it.

I was once assigned, as part of a huge Huron Institute review of federal programs for young children, to figure out what we knew about “latchkey” kids. These are kids who come home after school when their parents aren’t home, and let themselves in with a key (in the popular imagery of the time, with the key kept around their necks on a string). The question was, how many latchkey kids are there?

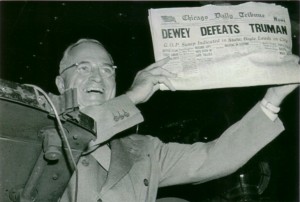

This was pre-Google, so I did a lot of library research and discovered that there were many studies attempting to answer this question. Curiously, though, all of them estimated about the same number of latchkey kids. I was intrigued by that, because I had done enough data work by then to find such consistency improbable.

I looked more deeply into the studies, figuring out where each researcher had gotten his or her data. It turned out that every last one of these studies traced to a single study, and that single study had been done in one town by someone who was arguing for a particular public policy and therefore was interested in showing that the number was relatively high. The original purpose of the data had been lost as they were reused by other researchers, and by the time I reviewed the finding, people thought that the latchkey-kid phenomenon was well and robustly understood based on multiple studies.

The same thing can happen with data mining. You can see multiple studies and think everyone has got separate data, but it turns out that everyone is using the same data. So provenance and primacy become very important issues.

Location

In many cases location turns into a financial issue: How do I get data from there to here? If the amount of data is small, the problem can be solved without tradeoffs. But for enormous collections of data, such as x-ray data from a satellite or even financial transaction data from supermarkets, how data get from there to here, and where and how they are stored, become policy issues, because sometimes the only way to move data from source to user and to store them is by summarizing, filtering, or otherwise “cleaning” or “compressing” them. Large datasets gathered elsewhere are often subject to such pre-processing, especially when they involve images or substantial detection noise, and the secondary analyst needs to know that.

Constraints on data located elsewhere arise too. There may be copyright or licensing constraints: you can use the data, but you cannot keep them, or you can use them and keep them, but you cannot publish anything unless the data collector gets to see – or, worse, approve – the results. All of these conditions typically have to do with where the data are located, because the conditions travel with the data from their source. Unlike the original data collector, the secondary analyst has no ability to change them.

Format

There are fewer and fewer libraries that actually have working lantern slide projectors. Yet there are many lantern slides that are the only records of certain events or images. In most cases, nobody has money to digitize or otherwise preserve those slides. As is the case for lantern slides, whether data can be used depends on their format, and so format affects what data are available. There are three separate kinds of issues related to format: robustness, degradation, and description.

Robustness has to do with the chain of custody, and especially with accidental or intentional changes. Part of the reason the seven-track Coleman tape used even parity was so that each six-bit character could be checked: the number of ones in the character, counting its parity bit, had to be even. If it was not, something had happened to those bits in transit, and that character could not be trusted. So one question about secondary data is: Is the format robust? Does it resist change, or at least indicate it? That is, does the data format stand up to the vagaries of time and of technology?
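
The even-parity check can be sketched in a few lines of Python. This is a minimal illustration, assuming each tape frame is represented as a 7-bit integer whose low six bits hold the data character and whose high bit holds the parity bit; that bit layout and the function names are hypothetical choices for the sketch, not a description of how any particular drive arranged its tracks.

```python
def parity_ok(frame: int) -> bool:
    """Return True if a 7-bit frame (6 data bits + 1 parity bit) has even parity.

    Assumed (hypothetical) layout: bits 0-5 are data, bit 6 is parity.
    """
    if not 0 <= frame < 1 << 7:
        raise ValueError("frame must fit in 7 bits")
    # Even parity: the total count of 1 bits across all seven tracks is even.
    return bin(frame).count("1") % 2 == 0


def make_frame(char6: int) -> int:
    """Attach an even-parity bit to a 6-bit data character."""
    if not 0 <= char6 < 1 << 6:
        raise ValueError("data character must fit in 6 bits")
    parity = bin(char6).count("1") % 2  # 1 when the data bits hold an odd count
    return char6 | (parity << 6)


def check_record(frames: list[int]) -> list[int]:
    """Return the positions of frames that fail the parity check."""
    return [i for i, f in enumerate(frames) if not parity_ok(f)]


record = [make_frame(c) for c in (0b000001, 0b101010, 0b111111)]
record[1] ^= 0b000100  # simulate one bit flipped in transit
print(check_record(record))  # prints [1]
```

A single parity bit catches any odd number of flipped bits in a frame but misses an even number, which is why it indicates change rather than preventing or repairing it.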

Degradation has to do with losing data over time, which happens to all data sets regardless of format. Error-correction mechanisms can sometimes restore data that have been lost, especially if multiple copies of the data exist, but none of those mechanisms is perfect. It’s important to know how data might have degraded, and especially what measures have been employed to combat or “reverse” degradation.
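
One simple way multiple copies can help is a position-by-position majority vote: where copies disagree, keep the value most of them agree on, and flag positions where no value has a strict majority. The sketch below assumes the copies are equal-length byte strings that are already aligned; the function name is hypothetical, and real preservation systems use considerably more elaborate auditing and repair.

```python
from collections import Counter


def majority_restore(copies: list[bytes]) -> tuple[bytes, list[int]]:
    """Reconstruct data from several aligned copies by per-byte majority vote.

    Returns the reconstructed bytes and the positions where no strict
    majority existed (bytes at those positions cannot be trusted).
    """
    if not copies:
        raise ValueError("need at least one copy")
    length = len(copies[0])
    if any(len(c) != length for c in copies):
        raise ValueError("copies must be the same length (assumed aligned)")

    restored = bytearray()
    unresolved = []
    for pos in range(length):
        votes = Counter(c[pos] for c in copies)
        value, count = votes.most_common(1)[0]
        if count * 2 <= len(copies):
            unresolved.append(pos)  # no strict majority: flag it, keep a guess
        restored.append(value)
    return bytes(restored), unresolved


# Three copies, each damaged in a different place.
print(majority_restore([b"PRINCIPALS", b"PRINXIPALS", b"PRINCIPA_S"]))
# prints (b'PRINCIPALS', [])
```

With only two copies, any disagreement is unresolvable, which is one reason “lots of copies” matters, and why the approach fails entirely if every holder assumes someone else has kept one.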

Finally, most data are useless without a description of the items in the dataset: not just how the data are recorded on the medium or the database schema, but also how they were measured, what the different values are, what was done when there were missing data, whether any data correction was done, and so on.

So, as a matter of policy, the “codebook” becomes an important piece of format. Sometimes codebooks come with data, but quite often one gets to the codebook by a path that is different from the one leading to the data, or has to infer what the codebook was by reading someone’s prior research. Both are dangerous. Inference is what we had to resort to with the Coleman Principals data, for example, because beyond the data layout all we had were summary tables. We had to deduce what the questions were and which questions had which values. It’s probably just as well we never used the data for analysis.
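
Checking data against a codebook can be made mechanical once the codebook is written down in machine-readable form. The sketch below is a hypothetical illustration: the `Field` structure, the fixed-width layout, and the field names and codes are invented for the example, not taken from the Coleman codebook, and it assumes missing data were recorded as a single character repeated across the field. Any value the codebook does not explain is flagged rather than silently interpreted.

```python
from dataclasses import dataclass


@dataclass
class Field:
    """One codebook entry (hypothetical structure for illustration)."""
    name: str
    start: int              # 0-based column where the field begins
    width: int              # number of columns the field occupies
    valid: dict[str, str]   # documented code -> meaning
    missing: str = " "      # assumed missing-data character, repeated across the field


def decode_record(line: str, codebook: list[Field]) -> tuple[dict, list[str]]:
    """Decode one fixed-width record; report values the codebook doesn't explain."""
    decoded: dict = {}
    problems: list[str] = []
    for f in codebook:
        raw = line[f.start:f.start + f.width]
        if len(raw) < f.width:
            problems.append(f"{f.name}: record too short")
            decoded[f.name] = None
        elif raw == f.missing * f.width:
            decoded[f.name] = None  # documented missing-data code
        elif raw in f.valid:
            decoded[f.name] = f.valid[raw]
        else:
            problems.append(f"{f.name}: undocumented code {raw!r}")
            decoded[f.name] = None
    return decoded, problems


codebook = [
    Field("school_level", 0, 1, {"1": "elementary", "2": "secondary"}),
    Field("enrollment_band", 1, 2, {"01": "under 250", "02": "250-499"}),
]
print(decode_record("102", codebook))
# prints ({'school_level': 'elementary', 'enrollment_band': '250-499'}, [])
print(decode_record("3  ", codebook))  # flags school_level; enrollment_band is missing
```

Flagging undocumented codes, instead of guessing their meaning, keeps the analyst’s assumptions visible, which is the problem users of shared data so often skip.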

Access

Here two policy issues arise.

The first access issue is promotion: researchers trying to market their data. The risks in that should be obvious, and it’s important that secondary analysts seek out the best data sources rather than vice versa.

As an example, as I was preparing this presentation a furor erupted over a public-radio fundraiser who had been recorded apparently offering to tilt coverage in return for sponsorship. That itself was not the data issue; the data issue was the flood of “experts” who offered themselves as commentators as the media frenzy grew. Experts were available, apparently and ironically, to document pretty much any perspective on the relationship between media funding and reporting. The experts the Chronicle of Philanthropy quoted were very different from the ones Forbes quoted.

The second issue is restriction. Some data are sensitive, and people may not want them to be seen. There are regulations and standard practices to handle this, but sometimes people go further and attempt to censor specific data values rather than restrict access. The most frequent problem is the desire on the part of data collectors to control analysis and/or publications based on their data.

Most cases, of course, lie somewhere between promotion and censorship. The key policy point is, all data flow through a process in which there may be some degree of promotion or censorship, and secondary analysts ignore that at their peril.

Support

This has become a big issue for institutions. Suppose a researcher on Campus A uses data that originated with a researcher at Campus B. A whole set of issues arises. Some of them are technical issues. Some of them are coding issues. Many of these I’ve already mentioned under Location and Format above.

A wonderful New Yorker cartoon (http://gregj.us/pFj6EN) captures the issue perfectly: a car is driving in circles in front of a garage, and one mechanic says to another, “At what point does this become our problem?”

Whatever the issues are, whom does the researcher at A approach for help? For substantive questions, the answer is often doctoral students at A, but a better answer might come from the researcher at B. For technical things, A’s central IT organization might be the better source of help, but some technical questions can only be solved with guidance from the originator at B. Is support for secondary analysis an institutional role, or the researcher’s responsibility? That is, do all costs of research flow to the principal investigator, or are they part of central research infrastructure? In either case, does the responsibility for support lie with the originator – B – or with the secondary researcher? These questions often get answered financially rather than substantively, to the detriment of data quality.

When data collection carries a requirement that access to the data be preserved for some period beyond the research project, a second support question arises. I spoke with someone earlier at this meeting, for example, about the problem of faculty moving from institution to institution. Suppose that a faculty member comes to campus C and gets an NSF grant. The grant is to the institution. The researcher gathers some data, does his or her own analysis, publishes, and becomes famous. Fame has its rewards: Campus D makes an offer too good to refuse, off the faculty member goes, and now Campus C is holding the bag for providing access to the data to another researcher, at Campus E. The original principal investigator is gone, and NSF probably has redirected the funds to Campus D, so C is now paying the costs of serving E’s researcher out of C’s own funds. There’s no good answer to this one, and most of the regulations that cause the problem pretend it doesn’t exist.

A Caution about Cautions

Let me conclude by citing a favorite Frazz comic, which I don’t have permission to reproduce here – Frazz is a great strip, a worthy successor to the legendary Calvin and Hobbes. Frazz (he’s a renaissance man, an avid runner who works as a school janitor) starts reading directions: “Do not use in the bathtub”. Caulfield (a student, Frazz’s protégé, quite possibly Calvin grown up a bit) reads on: “Nor while operating a motor vehicle.” They continue reading: “And not to be used near a fire extinguisher, not recommended for unclogging plumbing, and you do not stick it in your ear and turn it on.”

Finally Caulfield says, “Okay, I think we are good,” and he puts on his helmet. Then the principal, watching the kid, who is wearing skates and about to try rocketing himself down an iced-over sidewalk by pointing a leaf blower backwards and turning it on, says “This cannot be a good idea.” To which Frazz replies, “When the warnings are that comprehensive, the implication is that they are complete.”

If there is a warning about policy advice, it is that the list I just gave cannot possibly be complete. The kinds of things we have talked about today require constant thought!